We are so imperfect.

While most of us hate to admit it, we are all full of biases. This was brought to my attention very clearly in a shockingly honest blog post by Annie Pettit. As much as we might want to, we cannot change the way people are hard-wired. So what can we, as qualitative researchers, do? The first and most important thing is to be aware that bias exists. Bias in research is something we try to avoid because it undermines objectivity. Once we have that awareness, we can pay specific attention to it when we are developing discussion guides, moderating, conducting analysis and creating stories from our data.

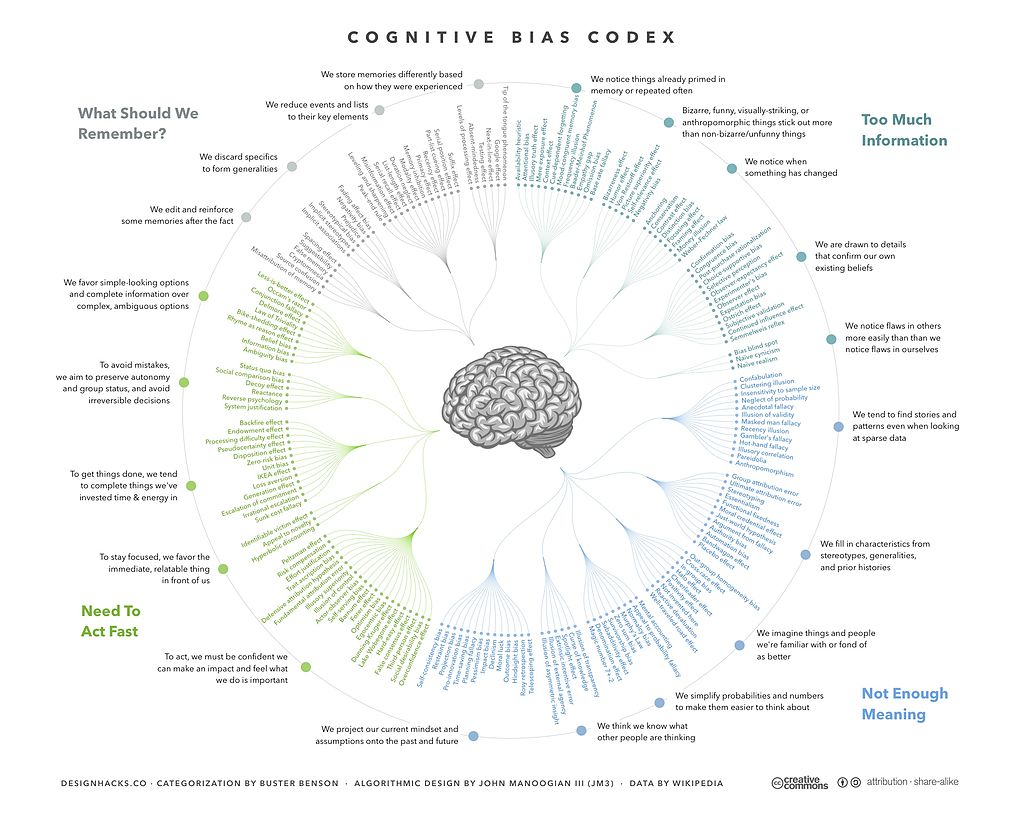

If we’re really keen we can print out the Cognitive Bias Codex to keep as a reference beside our desks, but that might be overkill.

Tips to address the imperfection.

Here are three types of bias, along with some tips we follow at Upwords to minimize each. We also look for specific ways in which online qualitative research gives us greater control over each bias.

1. Social Desirability Bias:

What is it? Participants answer in a way that they feel puts them in a more advantageous light. They might over-report positive behaviour and under-report negative behaviour.

What Upwords recommends:

These first two items apply to all qualitative research.

- Ensure participants know that the moderator is not the expert: we are learning about the topic from them, and there are no right or wrong answers.

- Where possible, avoid direct questions (which can be answered with just a few words). They are simply too easy to consciously or unconsciously ‘fake’.

These next items are particularly well suited to online qualitative discussions.

- Use projective techniques, especially (but not only) around sensitive issues. Asking participants to describe how a friend might react to a new concept can reveal latent concerns they had not mentioned earlier in the discussion.

- Develop ‘tasks’ or activities that have participants show us what they do instead of telling us about it.

Some may argue that people are used to ‘posturing’ in their online worlds, and that asking them to post photos and videos in research therefore does not help with social desirability bias. At Upwords, we continue to see less socially desirable behaviour when participants ‘show’ us what they do, and they exhibit honesty even when they are doing something that they know they shouldn’t be or that is contradictory. If you’re interested in more on this, Upwords is presenting on this topic at the MRIA conference on June 1.

2. Question Order Bias:

What is it? Participants become ‘primed’ by something they have seen previously in the discussion, or even in the screener.

What Upwords recommends:

These first four items apply to all qualitative research.

- Plan in advance. It is critical to think through the overall objectives and to consciously plan the screener, the tasks and the order in which they appear.

- Mask items that could prime a participant in the screener.

- Ask general questions before specific.

- Ask unaided questions before aided.

This last item applies specifically to online qualitative research.

- Where possible, rotate concepts or ideas and randomize lists within segments.

This was a big change for me when I switched from moderating in-person group discussions. In person we would rotate concepts between groups, but each group typically had somewhat homogeneous specs (for example, female heavy users in one group vs. female light users in another). Being able to rotate within segments of an online discussion board is a great advantage that helps minimize order bias in stimuli.
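For readers who want to see what rotating within segments can look like in practice, here is a minimal sketch. It is not how any particular discussion-board platform does it: the function name `rotate_within_segments`, the participant IDs, the segment labels and the concept names are all hypothetical. It simply cycles the starting concept for each new participant inside a segment, so that every concept gets its turn in first position within that segment.

```python
from collections import defaultdict


def rotate_within_segments(participants, concepts):
    """Give each participant a concept order, cycling the start position per segment.

    participants: list of (participant_id, segment) pairs (hypothetical format)
    concepts: list of concept labels in their default order
    """
    seen_in_segment = defaultdict(int)  # how many participants assigned so far, per segment
    orders = {}
    for participant_id, segment in participants:
        shift = seen_in_segment[segment] % len(concepts)
        # Rotate the list so a different concept leads for each successive participant.
        orders[participant_id] = concepts[shift:] + concepts[:shift]
        seen_in_segment[segment] += 1
    return orders


# Hypothetical recruit: three heavy users and two light users.
participants = [
    ("p1", "heavy"),
    ("p2", "heavy"),
    ("p3", "light"),
    ("p4", "heavy"),
    ("p5", "light"),
]
concepts = ["Concept A", "Concept B", "Concept C"]

orders = rotate_within_segments(participants, concepts)
for participant_id, order in orders.items():
    print(participant_id, order)
```

A simple cycle like this keeps the first-position exposure balanced inside each segment. A fully random shuffle per participant is another reasonable choice, though with small segments it can leave one concept leading more often than the others.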

3. Confirmation Bias:

What is it? Researchers and clients have a natural tendency to remember and interpret research findings in a way that supports what they already believe.

What Upwords recommends:

This first item applies to all qualitative research.

- Plan in advance. Holding a briefing meeting with clients to understand their hypotheses can help bring out preconceived ideas which helps the moderator know what they are looking to prove and/or

The following two items apply specifically to online qualitative research.

- Create tasks or activities that push the limits. We once had a study where we believed lapsed users would talk negatively about the brand. After two days of not hearing what we thought we would hear, we confronted the hypothesis directly with a provocative task. We found that participants actually stood up for the brand when that happened. The ensuing discussion helped uncover deeper insights around the root cause, which would not have surfaced without the time to reflect and adjust the guide.

- Code/tag/search transcripts to support both sides of the issue. It is easy during an in-person group discussion to lose track of how often an item was mentioned. Clients and moderators often recall an item they were ‘listening for’ as being more important than it actually was.

With online discussion boards we have an easier solution. We once had a client who thought they ‘heard’ that there was an issue with the saltiness of the product (their ingoing fear) in our online discussion related to a product home use test. Searching the transcripts, we quickly confirmed that only two participants (out of 30) had brought up saltiness, each of them numerous times.
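The kind of transcript check described above can be sketched in a few lines. This is only an illustration under assumptions: the `mention_counts` helper, the post format and the sample posts are hypothetical and are not data from the study. The point is to separate how many times a topic came up from how many different people raised it.

```python
import re
from collections import Counter


def mention_counts(posts, pattern):
    """Count keyword mentions per participant across all of their posts.

    posts: list of (participant_id, text) pairs (hypothetical export format)
    pattern: regular expression for the topic being checked
    """
    matcher = re.compile(pattern, re.IGNORECASE)
    counts = Counter()
    for participant_id, text in posts:
        hits = sum(1 for _ in matcher.finditer(text))
        if hits:
            counts[participant_id] += hits
    return counts


# Invented sample posts, for illustration only.
posts = [
    ("p01", "Too salty for me. Really salty aftertaste."),
    ("p02", "Loved the crunch."),
    ("p03", "A bit of saltiness, but fine."),
    ("p01", "Still salty on day two."),
]

counts = mention_counts(posts, r"\bsalt(?:y|iness)?\b")
total_mentions = sum(counts.values())
participants_mentioning = len(counts)

print(f"{total_mentions} mentions from {participants_mentioning} participants")
for participant_id, hits in counts.most_common():
    print(participant_id, hits)
```

Reporting both numbers side by side makes it harder for a few vocal participants to be remembered as a widespread issue. A keyword search will still miss paraphrases, so it supports a careful read of the transcripts rather than replacing one.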

We mortals will remain perfectly imperfect. But with a little self-awareness and the right tools at our disposal, we can continuously work to be conscious of biases, know where to find them and decide how to approach them so that our research is sound.